Can AI Replace Therapy? Why Mental Health Care Still Needs a Human Relationship

There’s a reason people are turning to AI for mental health support. It’s fast. It responds instantly with what feels like thoughtful guidance. You don’t have to explain yourself. You don’t have to wonder if you’re “too much” for someone. You can just type, and something meets you there.

But it goes deeper than that. For many people, access to therapy is not simple. In some areas, there are long waitlists or no providers at all. Evening and weekend availability can be limited. Cost can be a barrier. And for some, the last time they tried therapy, it wasn’t a good experience. They didn’t feel a real connection. They didn’t feel understood.

In that context, AI doesn’t just feel convenient. It can feel like the only option. And when something responds immediately and reflects your experience, it can create a real sense of relief.

But before we start calling it therapy, it’s worth pausing. What AI is doing well, and what therapy is designed to do, are not the same thing.

RECOGNIZING A CONDITION VS. PROVIDING GUIDANCE

AI is incredibly effective at validating what you say and offering potential solutions to the problem in front of you. It can help you reframe a thought, organize your feelings, or give you language for something that has been hard to articulate.

In fact, early research on AI-driven mental health tools shows that people can experience short-term reductions in symptoms of depression and anxiety when engaging with chatbot-style support, particularly in studies of CBT-based chatbots (Fitzpatrick et al., 2017; Inkster et al., 2018).

That makes sense. Immediate, reflective responses that offer direction can bring genuine relief. But that relief is often temporary, because the tool is working at the level of what is presented, not the pattern underneath it.

This limitation becomes more visible outside controlled settings, where AI is no longer working from structured inputs but from real people describing their own situations.

In a study published in Nature Medicine, AI systems tested on their own with written medical scenarios identified the relevant conditions about 95% of the time. But when members of the public used those same systems to work through the scenarios and decide on next steps, the relevant conditions were identified in only about 34.5% of cases (Bean et al., 2026).

That gap is not subtle.

RESPONDING IS NOT UNDERSTANDING

The issue is not simply whether AI can process information. It is whether it can interpret human context well enough to guide real-world decisions.

As outlined in emerging critiques of AI mental health tools, many of these systems are designed around a specific model of how humans function. They treat thoughts as problems to solve, emotions as things to regulate quickly, and distress as something that can be reduced through the right input and output (Fullam, 2025).

You say something hard. The chatbot responds instantly, validates you, and offers a reframe or a solution. On the surface, it looks like progress. But what's happening is much smaller than what therapy is designed to do.

Real change is not just about addressing the thought in front of you. It’s about understanding the pattern underneath it. It’s about recognizing how that pattern shows up across your relationships, your decisions, your reactions, and your life.

AI can notice patterns in what you share. But you can always start over, shift the story, or leave things out. A therapist remembers and brings the pattern back, so it doesn’t disappear just because the conversation moves on.

THERAPY IS A RELATIONSHIP, NOT A TECHNIQUE

As we begin to rely more on AI for mental health support, we risk unintentionally redefining what therapy is. It starts to look like a set of techniques. A place to vent and receive validation. A tool that helps you feel better in the moment.

In contrast, therapy is a relationship. It is the process of being seen, challenged, understood, and responded to by someone who is actively tracking you over time. Someone who notices the shifts, the defenses, the contradictions, and the growth. Someone who is not just responding to your words, but to you.

That difference becomes clearer when looking at how AI systems engage with people.

Research from Brown University has already started to outline some of the risks. In their study of AI mental health chatbots, researchers found that these systems can:

- Reinforce harmful beliefs instead of challenging them

- Default to one-size-fits-all responses that don’t reflect a person’s lived experience

- Use phrases like “I understand” in ways that create the impression of empathy without actually understanding

In some cases, the gaps were more serious. Systems failed to respond appropriately in crisis situations, including not directing users to support when suicidal thoughts were expressed (Brown University, 2025).

Taken together, these findings show how such systems tend to engage in practice.

THERAPY IS NOT JUST ABOUT WHAT IS SAID

This is the gap. Therapy extends beyond the words themselves. It is about what happens between two people: how you show up, how you avoid, how you protect yourself, and how you connect.

That process cannot be replicated by something that is only responding to inputs.

When you remove that, you don’t just remove a “nice to have” element. You remove the mechanism that actually creates change.

MORE CONCERNS BEING RAISED

Recent reporting is beginning to highlight additional concerns. Unlike clinical care, these systems operate without the safeguards that are standard in therapeutic settings. Chatbots do not conduct structured risk assessments, and people experiencing suicidal thoughts or severe distress can engage with them for extended periods without being escalated to human support or intervention (The Guardian, 2026).

In more serious cases, there have been documented situations where AI interactions failed to respond appropriately to high-risk scenarios or contributed to worsening mental health outcomes (American Psychiatric Association, 2024). This is not a matter of bad intent. It happens because no one on the other side of the conversation carries ethical responsibility or human awareness.

In a health advisory, the American Psychological Association cautioned that generative AI chatbots and wellness apps should not replace care from a qualified mental health professional, noting that their ability to safely guide individuals, especially in high-risk situations, remains unreliable (American Psychological Association, 2025).

THE RISK OF REDEFINING THERAPY

If people begin to believe that therapy is simply validation, advice, and quick emotional relief, they may never seek out the kind of work that leads to lasting change.

They may feel better temporarily but stay stuck in the same patterns. They may be given solutions to the problems they present, while the deeper pattern driving those problems goes completely untouched.

Over time, this doesn’t just stall progress. It can reinforce the very things someone is trying to break out of.

AI HAS A ROLE. THERAPY HAS A RESPONSIBILITY.

AI can be a powerful tool. Used well, it can help someone organize their thoughts or take a first step toward reaching out for support. Likewise, therapists can use it to reduce administrative burden so more time can be spent focused on the person, not the process.

AI can complement care. There is real value in that. But it is not therapy.

Therapy is not just about what is said. It is about what is experienced, repeated, and transformed within a relationship. And that cannot be automated.

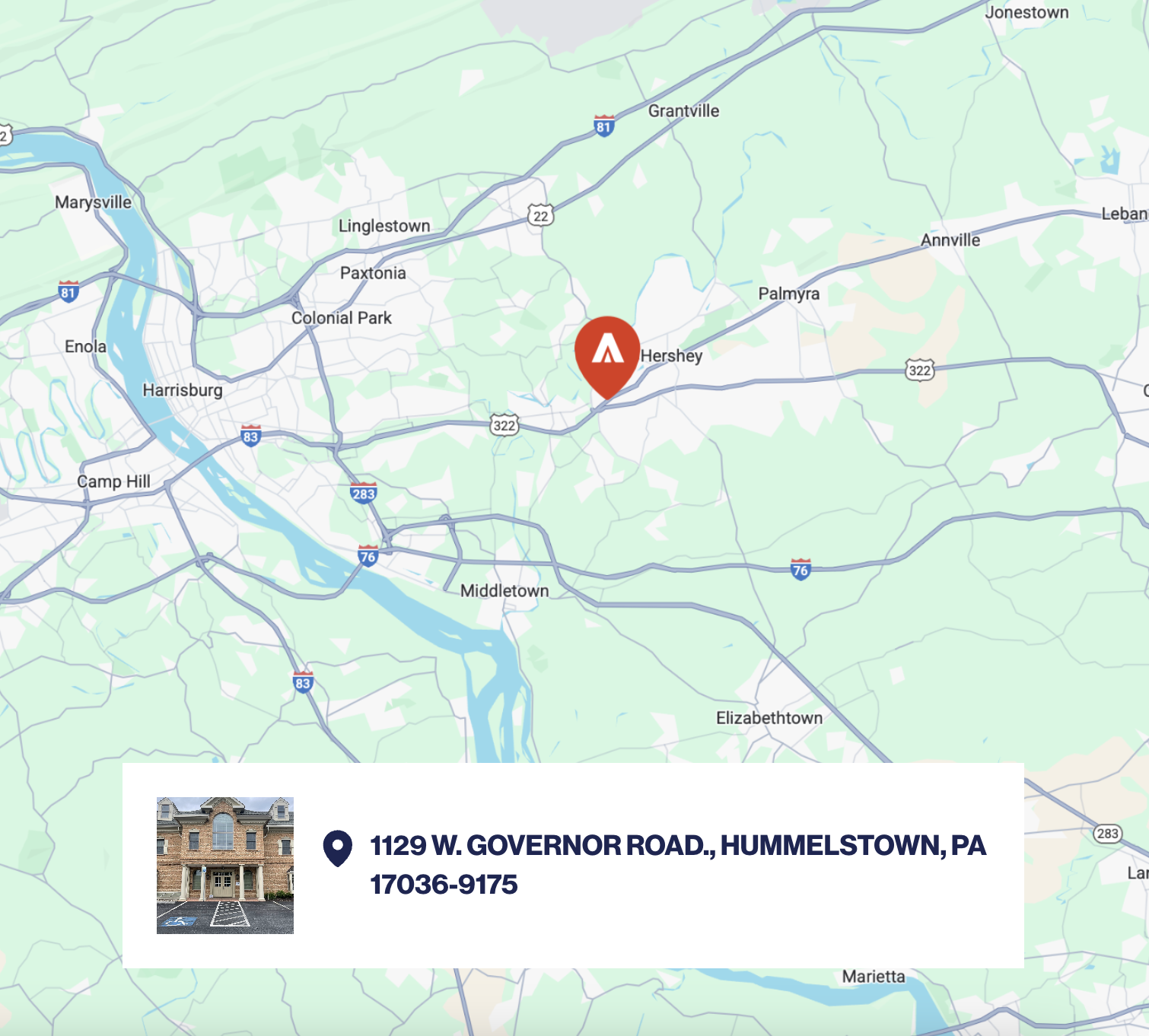

At Sanare, this is exactly why we do the work the way we do. Therapy feels different when clinicians bring their authentic selves into the room and respond creatively to what is happening. Connection forms with others who have faced similar struggles, and that shared experience allows people to see their patterns more clearly and begin responding to them differently.

This is what makes therapy more than a conversation. It carries a responsibility to do more than respond. It has to recognize when something is not improving, adjust the approach, and step in when someone needs more support than they can manage on their own.

That is why it cannot be replaced.

AI can respond. What it cannot do is take responsibility for what happens next.

REFERENCES

American Psychological Association. (2025). Health advisory on AI chatbots and wellness apps. https://www.apa.org/topics/artificial-intelligence-machine-learning/health-advisory-chatbots-wellness-apps

Associated Press. (2025). Study says AI chatbots need to fix suicide response, as family sues over ChatGPT role in boy’s death. https://apnews.com/article/da00880b1e1577ac332ab1752e41225b

Bean, A. M., Payne, R. E., Parsons, G., et al. (2026). Reliability of LLMs as medical assistants for the general public: A randomized preregistered study. Nature Medicine, 32, 609–615. https://doi.org/10.1038/s41591-025-04074-y

Brown University. (2025). Study reveals ethical risks in using AI chatbots for mental health support. https://www.brown.edu/news/2025-10-21/ai-mental-health-ethics

Draelos, R. L., Afreen, S., Blasko, B., et al. (2026). Large language models provide unsafe answers to patient-posed medical questions. npj Digital Medicine, 9, 241. https://doi.org/10.1038/s41746-026-02428-5

Fitzpatrick, K. K., Darcy, A., & Vierhile, M. (2017). Delivering cognitive behavior therapy to young adults with symptoms of depression and anxiety using a fully automated conversational agent (Woebot): A randomized controlled trial. JMIR Mental Health. https://doi.org/10.2196/mental.7785

Fullam, E. (2025). Chatbot therapy: A critical analysis of AI mental health treatment. Routledge. https://doi.org/10.4324/9781003586425

Giorgi, S., Isman, K., Liu, T., et al. (2024). Evaluating generative AI responses to real-world drug-related questions. Psychiatry Research. https://doi.org/10.1016/j.psychres.2024.116058

Inkster, B., Sarda, S., & Subramanian, V. (2018). An empathy-driven, conversational artificial intelligence agent (Wysa) for digital mental well-being: Real-world data evaluation. JMIR mHealth and uHealth. https://doi.org/10.2196/12106

Pew Research Center. (2025). Teens, social media and AI chatbots 2025. https://www.pewresearch.org/internet/2025/12/09/teens-social-media-and-ai-chatbots-2025/

RAND Corporation. (2025). One in eight adolescents and young adults use AI chatbots for mental health advice. https://www.rand.org/news/press/2025/11/one-in-eight-adolescents-and-young-adults-use-ai-chatbots.html

The Guardian. (2026). Unregulated chatbots are putting lives at risk. https://www.theguardian.com/technology/2026/apr/01/unregulated-chatbots-are-putting-lives-at-risk
